If there’s one thing that’s clear from every conversation I’ve had recently – whether with customers, colleagues, or industry peers – it’s this: AI ambition has never been higher.

But ambition alone doesn’t equal readiness.

In our recent Data Integrity & AI Forum, I had the opportunity to sit down with Rabun Jones, CIO at C Spire; Andrew Brust, CEO of Blue Badge Insights; and Dave Shuman, Chief Data Officer at Precisely.

Together, we unpacked what it really means to be “AI ready” – and why so many organizations are struggling to turn that ambition into measurable results.

The discussion was grounded in findings from data and analytics leaders in the 2026 Data Integrity & AI Readiness report, published by Precisely in partnership with the Center for Applied AI and Business Analytics at Drexel University’s LeBow College of Business.

One consistent theme emerged: there’s a growing gap between how ready organizations think they are, and what it actually takes to succeed with AI at scale.

Let’s break down the biggest takeaways.

The AI Readiness Gap Is Real, and Growing

According to the report, 87% of organizations say they’re ready for AI. But at the same time, 40–43% cite infrastructure, skills, and data readiness as major blockers.

So, what’s the disconnect? As Andrew Brust put it:

“It’s hard for people to say no because that looks like they’re cynical about AI, and there’s so much pressure to be optimistic about it.”

He went on to explain that both external pressure and genuine excitement are driving inflated confidence. But underneath that enthusiasm, many organizations haven’t fully accounted for the complexity of scaling AI.

Rabun Jones highlighted another key factor:

“I do think that some of it is a definition drift … what you were thinking about a year ago with AI or what it could do is very different than what you’re thinking about today.”

In other words, the goalposts are moving. What counted as “AI ready” a year ago – basic data access, some experimentation – is no longer enough. Today, readiness means:

- Governance at scale

- Secure deployment

- Repeatable outcomes

- Operational integration

Dave Shuman summed it up with a concept that resonated across the panel: altitude confusion.

“Organizations are evaluating readiness at the platform level: ‘Do we have the infrastructure provision? Do we have subscriptions to the appropriate LLMs?’ But the real test of readiness lives one floor down from that, at the operating model level.”

Dave also noted that many organizations are successfully piloting AI, but far fewer are scaling it. As he put it, “AI readiness isn’t experimentation. It’s about repeatability.”

That distinction matters. Experimentation allows for:

- Isolated use cases

- Limited risk

- Manual oversight

But repeatability requires:

- Data quality

- Governance

- Monitoring

- Cross-functional accountability

And most organizations aren’t there yet. Even more importantly, there’s often confusion between being ready to experiment and being ready for enterprise deployment. This is where many AI initiatives stall.

Key takeaway: Simply having the right tools in place doesn’t equate to AI readiness. You need a repeatable, governed operating model.

Governance Isn’t an AI Barrier. It’s an Accelerator.

Governance came up repeatedly in our discussion, and not in the way you might expect.

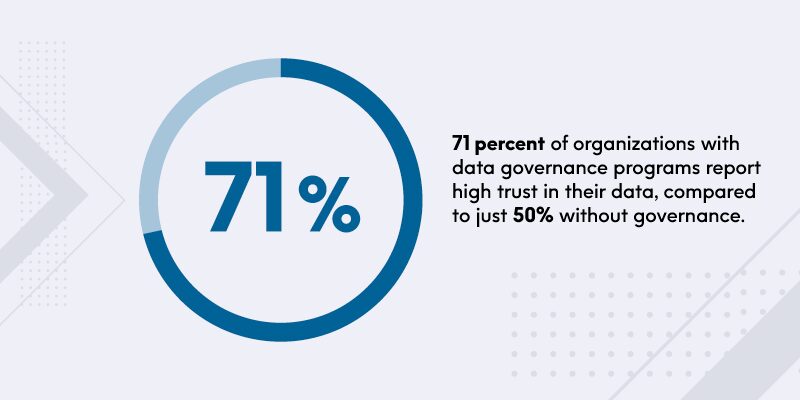

Too often, governance is seen as slowing things down. But the data tells a different story:

71% of organizations with governance programs report high trust in their data. Without governance, that number drops significantly.

Dave reframed governance in a way that stood out: “Governance shouldn’t be viewed as friction. It is traction.”

That’s a critical mindset shift. Strong governance:

- Builds trust

- Enables scale

- Reduces risk

- Accelerates adoption

Andrew added, “Governance doesn’t have to be the land of no … it should really eliminate the trust barriers that have blocked people from saying yes to AI.”

And importantly, the most successful organizations aren’t creating entirely new governance structures – they’re extending existing data governance into AI.

Why? Because splitting governance creates fragmentation:

- Conflicting definitions of trust

- Duplicate efforts

- Inconsistent controls

Key takeaway: The fastest path to trusted AI is building on what already works – your data governance foundation.

Data Quality Debt Is Catching Up – Fast

Another major insight from the report: 51% of data leaders say data quality is their top priority.

For years, organizations have carried “data quality debt” – issues that were manageable in traditional analytics environments. But AI changes the equation and raises the urgency of paying that bill.

As Andrew described it, “AI is like a big magnifying glass and a big spotlight.”

In the past, human analysts could spot inconsistencies, apply context, and compensate for flaws. AI doesn’t work that way. It scales both:

- Good data → better outcomes

- Bad data → amplified errors

Rabun made the stakes even clearer, saying that in the agentic AI era in particular, “We’re going to move from insight to action … now it’s going to show up in actual bad actions that are taken against the wrong data.”

To mitigate the growing risk around bad data quality, leading organizations are moving from:

- Static quality checks → Continuous monitoring

- One-time fixes → Ongoing observability

- Manual processes → Automated controls
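As a rough illustration of what an automated, continuous check might look like, here is a minimal Python sketch. It is not taken from the report or the panel discussion. The `Rule` class, the `evaluate` function, the field names, and the thresholds are all hypothetical, chosen only to show the idea of scoring every incoming batch against declared rules instead of auditing data once.

```python
from dataclasses import dataclass
from typing import Callable, Iterable

Record = dict  # one row of data, e.g. {"customer_id": "C1", "email": "a@b.com"}


@dataclass
class Rule:
    """A hypothetical data quality rule with an alert threshold."""
    name: str
    check: Callable[[Record], bool]  # returns True when a record passes
    min_pass_rate: float             # flag the batch when the pass rate is lower


def evaluate(batch: Iterable[Record], rules: list[Rule]) -> dict:
    """Score one batch against every rule and flag rules below threshold."""
    rows = list(batch)
    results = {}
    for rule in rules:
        passed = sum(1 for row in rows if rule.check(row))
        # An empty batch has nothing to fail, so treat it as passing.
        rate = passed / len(rows) if rows else 1.0
        results[rule.name] = {"pass_rate": rate, "ok": rate >= rule.min_pass_rate}
    return results


# Illustrative rules only; real rules would come from your governance program.
RULES = [
    Rule("customer_id present", lambda r: bool(r.get("customer_id")), 1.0),
    Rule("email has @", lambda r: "@" in (r.get("email") or ""), 0.95),
]

# A made-up batch with two deliberately bad emails and one missing ID.
batch = [
    {"customer_id": "C1", "email": "ann@example.com"},
    {"customer_id": "C2", "email": "bob.example.com"},
    {"customer_id": "", "email": "cy@example.com"},
    {"customer_id": "C4", "email": None},
]

report = evaluate(batch, RULES)
for name, outcome in report.items():
    status = "OK" if outcome["ok"] else "ALERT"
    print(f"{status}: {name} pass rate {outcome['pass_rate']:.0%}")
```

In practice a check like this would run on a schedule or on every pipeline load, with alerts routed to the owners of the data, so that quality is tracked as an ongoing condition and not as a one-time cleanup.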

Key takeaway: The bill is now due for data quality debt. Data quality needs to be repositioned from a cleanup task into a continuous operating condition.

Proving AI Value Requires Discipline, Not Magic

One of the most striking findings from the report was that:

- 71% say AI aligns with business goals …

- But only 31% have metrics tied to KPIs

There’s a clear disconnect, and Andrew explained why:

“There’s an appeal of AI, that it’s so transformative that it makes us think it changes the rules around precision and the metrics that you measured. And the power of seeing that alleged magic kind of divorces us from … actually managing what you measure.”

AI certainly is transformative, but that doesn’t remove the need for clear success metrics, financial accountability, and outcome-based measurement.

Dave outlined three things that separate successful organizations. They:

- Define success – in business outcomes – before they start

- Resist temptations to keep things “safe” in pilot – and move into production, where value is created

- Build an integrated data integrity operating model that brings together data quality, governance, context, observability, skills, and business alignment

Rabun reinforced the importance of connecting everything back to value:

“It’s a maturity model. If you’re not already involved in that model of making that value chain connection of moving up data, the inference, all of these things – you need to be catching up to that quickly,” he said. “Because that’s how you make it work, and that’s how you get to the value. You invest at the foundational level … but then you take use cases where you can deploy up that full value chain.”

Key takeaway: AI success can’t just be measured in model performance – you need to define and measure real business impact.

AI Success Starts – and Ends – with Data Integrity

As we wrapped up the discussion, one theme stood above the rest: trusted AI starts with trusted data.

But it doesn’t stop there. To truly close the gap between AI ambition and execution, organizations need to:

- Move from experimentation to repeatability

- Treat governance as an accelerator, not a blocker

- Address data quality as an ongoing discipline

- Measure success in business terms

Because in the end, AI needs to be reliable, scalable, and actionable. And that’s where data integrity makes all the difference. Read our 2026 Data Integrity & AI Readiness report for more insights from data and analytics leaders worldwide, and hear more from our panel of experts in the full webinar, The Data Integrity & AI Forum: AI Excitement vs. Enterprise Reality.

FAQs: AI Readiness and Data Integrity

What is AI readiness?

AI readiness refers to an organization’s ability to successfully deploy, scale, and operationalize AI initiatives. It goes beyond having the right tools or infrastructure and includes data quality, governance, skills, and a repeatable operating model that delivers consistent business outcomes.

Why do many organizations struggle with AI readiness?

Many organizations overestimate their AI readiness due to strong enthusiasm and pressure to adopt AI. However, gaps in data quality, governance, infrastructure, and operational processes often prevent them from scaling beyond initial pilots into enterprise-wide deployment.

Why is data quality important for AI?

Data quality is critical for AI because AI systems amplify both good and bad data. High-quality data leads to more accurate and reliable outcomes, while poor data quality can result in incorrect insights or actions – especially in automated and agentic AI use cases.

How does data governance impact AI success?

Governance enables trusted AI by ensuring accountability, consistency, and control over data and models. Organizations with strong governance programs report higher trust in their data and are better positioned to scale AI initiatives with confidence.

How can organizations measure AI success?

Organizations can measure AI success by tying initiatives to business outcomes such as revenue impact, cost savings, or efficiency gains. Defining success metrics upfront and moving beyond pilot phases into production are key to demonstrating real ROI.