Data catalog solutions

Empower both business and technical teams with a dynamic data catalog to find, understand, and leverage your most valuable asset – your data.

Business-first data catalog

Imagine you visit a library to find a specific book. You have two approaches to consider:

Option 1 – start browsing the aisles until you find what you’re looking for.

Option 2 – use an online catalog to quickly find a specific book of interest, learn where the book is located, see what edition is on the shelf, and read a brief synopsis so you can be sure it contains the information you need.

For most people, the second option is the clear winner!

If you think of all the data in your organization as books in an expansive, digital library, having an organized inventory of assets is essential to finding and using them. Otherwise, without a way to locate the correct data and know its value, you’re left with a mountain (or lake) of meaningless information.

The solution?

You need a data catalog.

Historically, data catalogs have been built for the technical teams in an organization. They contained an index of physical metadata, such as the location, schema, and runtime metrics of your data. These remain critical to a modern smart data catalog. In the 2025 Outlook: Data Integrity Trends and Insights survey, 76% of respondents say data-driven decision-making is the #1 goal for their data programs, yet 67% don’t completely trust their data.

Increasingly, data catalogs are being used more broadly within the organization because they contain valuable intelligence that enables revenue growth, risk management, and cost control across the business. Data users and consumers are demanding native-language search capabilities, with advanced semantic tags that make data assets easy to search, understand, and access. They need data that is accurate, consistent, and contextualized, so that business decisions rest on data with maximum integrity.

No longer a “nice to have”

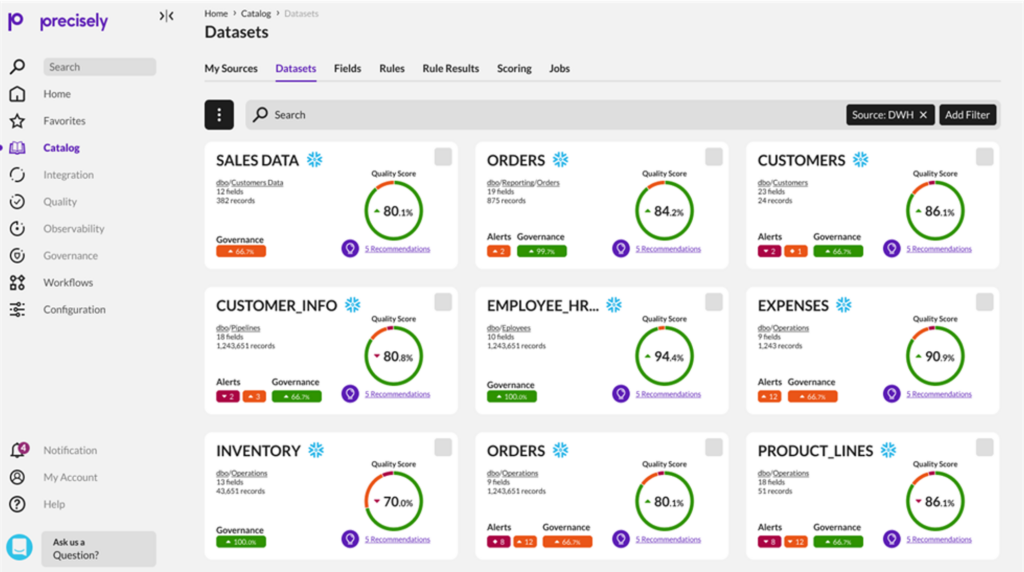

A data catalog provides context that enables users across the organization – from data stewards to data engineers, data analysts, and other data consumers – to find and understand relevant data and extract business value from it.

Modern machine-learning-augmented data catalogs automate various tedious tasks involved in data cataloging, including metadata discovery, ingestion, translation, enrichment, and the creation of semantic relationships between metadata. Next-generation data catalogs will propel enterprise metadata management projects by allowing business users to participate in understanding, enriching, and using metadata to inform and further their data and analytics initiatives.
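As a rough illustration of what automated metadata discovery involves at its simplest, the sketch below crawls a database and records each table's schema and row count as a catalog entry. It is a minimal sketch using Python's built-in `sqlite3` module, not a description of how any particular catalog product works; the function name `harvest_metadata` and the sample `customers` table are hypothetical.

```python
import sqlite3


def harvest_metadata(conn):
    """Return one catalog entry per table: column names/types and a row count."""
    entries = []
    tables = conn.execute(
        "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
    ).fetchall()
    for (table,) in tables:
        # PRAGMA table_info yields (cid, name, type, notnull, dflt_value, pk)
        # for every column in the table.
        columns = [
            {"name": row[1], "type": row[2]}
            for row in conn.execute(f'PRAGMA table_info("{table}")')
        ]
        row_count = conn.execute(f'SELECT COUNT(*) FROM "{table}"').fetchone()[0]
        entries.append({"asset": table, "columns": columns, "row_count": row_count})
    return entries


# Hypothetical sample database, used only for this illustration.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, email TEXT)")
conn.execute("INSERT INTO customers (email) VALUES ('a@example.com')")

catalog = harvest_metadata(conn)
for entry in catalog:
    print(entry)
```

A production catalog would go much further, for example by profiling values and suggesting semantic tags, but the core idea of building an inventory by reading metadata from the source is the same.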

Data catalogs are no longer a “nice to have” but a “need to have.” Core components in Precisely’s business-first data catalog solution include:

- Business-friendly interface, metamodel, and search capabilities where both business and technical users can search, explore, understand, and collaborate around critical data assets.

- Artificial intelligence/machine learning (AI/ML) to identify and semantically tag assets for faster and more complete asset discovery.

- Visualizations to easily understand relationships, lineage, and impact analysis.

- Automated metadata harvesting to crawl, profile, and manage data to build and maintain a single searchable inventory of data assets.

- Workflow to monitor, audit, certify, and track data lifecycle and enable data stewards to manage data assets and share information.

- Collaborative functionality to enable sharing of tribal knowledge, comments, and surveys.
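To make two of these components more concrete, the sketch below models a tiny in-memory catalog with tag-aware search and a simple downstream impact analysis over lineage links. It is an illustrative sketch only: the `Asset` class, the `search` and `impacted_by` functions, and the sample assets are all hypothetical and do not represent Precisely's API.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Asset:
    name: str
    description: str
    tags: set = field(default_factory=set)     # semantic tags, e.g. "pii"
    feeds: list = field(default_factory=list)  # names of downstream assets


def search(catalog, term):
    """Case-insensitive match against asset names, descriptions, and tags."""
    term = term.lower()
    return sorted(
        asset.name
        for asset in catalog.values()
        if term in asset.name.lower()
        or term in asset.description.lower()
        or any(term in tag.lower() for tag in asset.tags)
    )


def impacted_by(catalog, name):
    """Breadth-first walk of lineage links: every asset downstream of `name`."""
    seen, queue = [], deque(catalog[name].feeds)
    while queue:
        current = queue.popleft()
        if current not in seen:
            seen.append(current)
            queue.extend(catalog[current].feeds)
    return seen


# Hypothetical sample assets, used only for this illustration.
assets = [
    Asset("crm_contacts", "Raw contact records from the CRM",
          {"customer", "pii"}, ["customer_360"]),
    Asset("customer_360", "Unified customer profile",
          {"customer"}, ["churn_dashboard"]),
    Asset("churn_dashboard", "Weekly churn KPIs", {"kpi"}),
]
catalog = {asset.name: asset for asset in assets}

print(search(catalog, "customer"))
print(impacted_by(catalog, "crm_contacts"))
```

Searching on a tag such as "pii" finds assets even when the term never appears in their names, and the lineage walk answers the impact-analysis question of which reports are affected if a source table changes.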

Data Driven Outcomes: It all starts with the Data Catalog

Transform your data with data governance

A data catalog and data governance have different definitions, but they share a common goal: to empower all data users to discover, leverage, and trust data assets.

A data catalog is a core component of data governance. But a data catalog without a solid data governance framework to manage people, processes, and technology will fall short of its optimal value to an organization. Businesses must understand both how the two differ and how they reinforce each other.

With data governance, you’re implementing a framework that helps data users understand and transform data assets in the data catalog into valuable information that powers business outcomes. Data governance enables you to:

- Identify the data stewards/owners responsible and accountable for the data’s origin, definition, business attributes, relationships, and dependencies to build a common understanding across teams.

- Provide user-friendly options that encourage teamwork and collaboration to synthesize all the technical and business details surrounding your organization’s data assets across multiple users in different departments.

- Document how data assets relate to KPIs and business objectives across all data users and consumers in the organization – seamlessly bringing visibility to the actual business value.

Look to the future with a next-generation data catalog

If data users aren’t able to find, understand, and trust all critical data, then valuable information – and time – is lost in simply attempting to locate, aggregate, interpret, and supplement raw data.

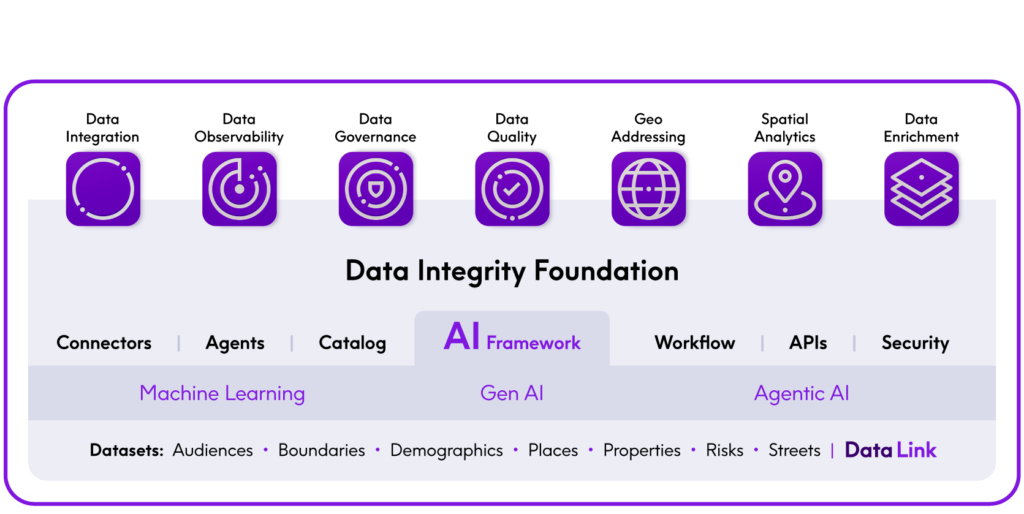

But what if you could unite these capabilities in a modular, interoperable SaaS suite with a common set of services and a business-friendly user experience? That’s where the Precisely Data Integrity Suite changes the game. All user journeys start from the data catalog, which inventories metadata from all seven services in the Suite. With this approach, your team can discover, understand, trust, and enrich data from a single interface and user experience to expedite business outcomes and analytics. Think of this as your one-stop shop for trusted data.

Addressing new use cases through a shared data catalog is cost-effective, easy to deploy, and highly scalable. The service design means you can consume the services you need, when you need them. And while each best-in-class service delivers tremendous value, what makes the Suite truly unique is the Data Integrity Foundation – connecting all services through the data catalog.

Deliver trusted data to your business with the Data Integrity Suite

Precisely is here to help

Data catalog that addresses your unique needs

Whether you’re looking for a data catalog that’s stand-alone, powered with robust data governance capabilities, or the bedrock of the Data Integrity Suite, Precisely provides a business-friendly data catalog that enables teams to quickly discover, understand, and leverage the critical data that impacts business outcomes.

Explore how we can help you adapt to the use cases that are the most important drivers for your company.